Artificial Intelligence in the manufacturing sector - Gnutti Carlo Spa case study

THE Ò-BLOG

AI Observatory 2020/2021 – Business Case

9 March, 2021

Reading time: 6 minutes

As a partner of the AI Observatory, we had the opportunity to present one of our most significant case studies at the Politecnico di Milano (you can read it here: Osservatorio Artificial Intelligence – Business Case – Gnutti Carlo Spa).

It was an opportunity to dispel some myths and false beliefs about developing artificial intelligence projects in the manufacturing sector, computer vision projects in particular. These myths also emerge from the Observatory’s research report (you can read our analysis here: our stories – AI Observatory 2020/2021).

The Italian computer vision market is a constantly developing segment, but only 30% of the companies interviewed have a project in progress: 12% have reached the production phase, while the remaining 18% are still in the PoC or preliminary phase.

According to the Observatory’s data, preparing the dataset (so that the data are of good quality and in adequate quantity) is complex: 65% of the companies interviewed consider the phase of data integration, preparation and labelling highly critical, which makes it one of the impediments to starting an AI project. In addition, 59% of respondents consider building a business case one of the main issues, because uncertainty about the output affects the definition of requirements, costs and benefits.

MYTH #1: Creating a dataset is a long and expensive activity

Creating the dataset used to train and validate a neural network is a fundamental step in an artificial intelligence project, and it is also one of the obstacles limiting the spread of AI systems in our companies.

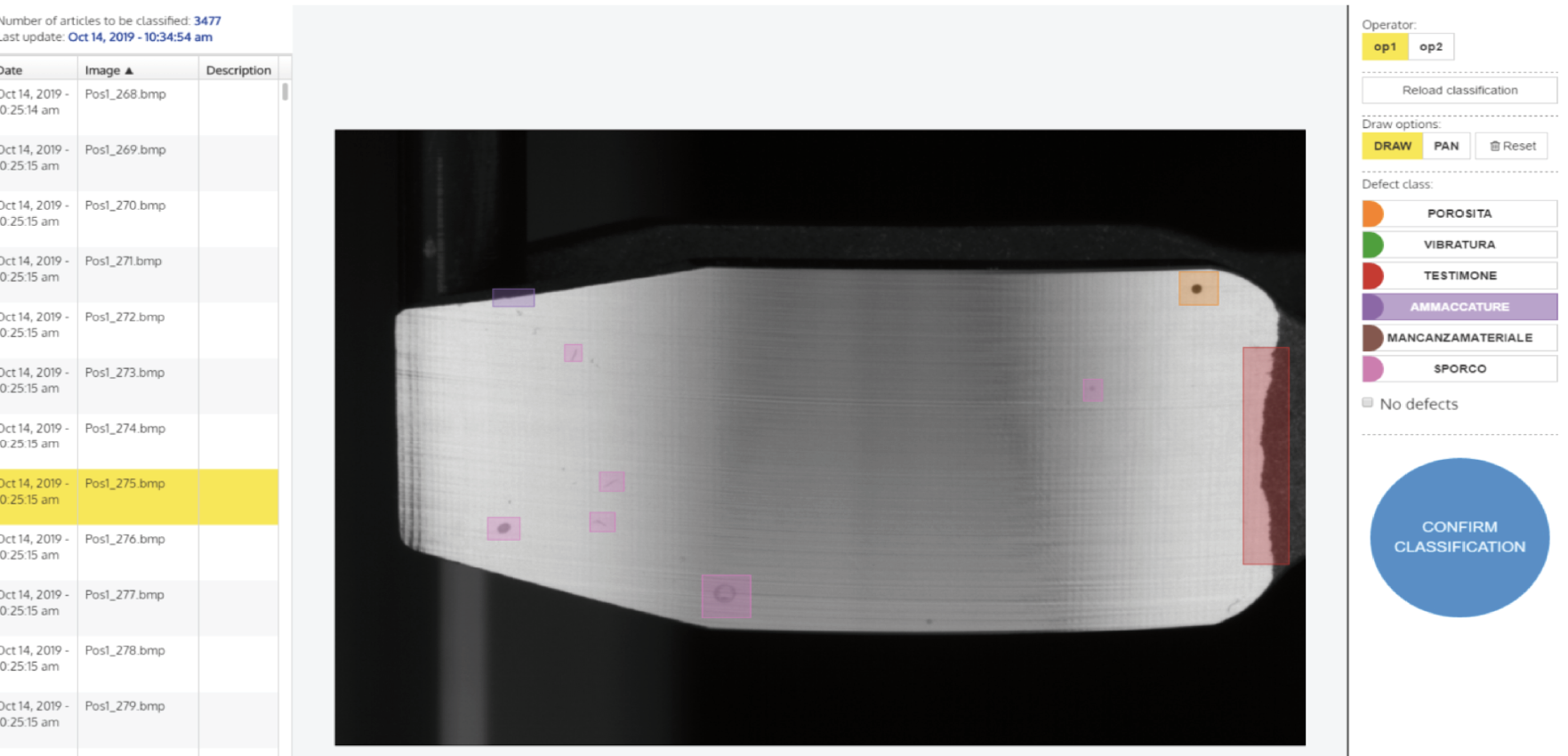

In this business case (you can also find it here: our case histories – Defects detection, automotive), we provided the customer with a labelling tool: intuitive, easy-to-use software that let them view the 1400 images already available, outline the defects present (in technical terms, segment them) and divide them into several categories (in technical terms, tag them).

This is usually a very time-consuming operation, which the tool reduced to 10 man-hours for the first iteration and another 4 man-hours for the second one.

So, how can dataset creation be made faster and more effective?

Here is our method:

1. providing the client with an annotation system for viewing, labelling and saving the data, so that they are automatically made available to the neural network;

2. refining the dataset using the information that comes from monitoring the model in production: by analysing the uncertainty of the model's judgments, it is possible to plan targeted annotation campaigns that give the network the data it needs to improve its performance, focusing directly on the cases the model handles with the most difficulty.
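As an illustration of the second step, here is a minimal sketch of how uncertain predictions could be ranked for a targeted annotation campaign. It is not the tool used in the project: it assumes the model outputs class probabilities and uses Shannon entropy as the uncertainty measure, and the names `prediction_entropy`, `select_for_annotation` and `production_log`, as well as the sample values, are hypothetical.

```python
import math


def prediction_entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Return the ids of the `budget` most uncertain predictions.

    `predictions` maps an image id to the class probabilities
    the model produced for that image.
    """
    ranked = sorted(
        predictions,
        key=lambda image_id: prediction_entropy(predictions[image_id]),
        reverse=True,  # highest entropy (most uncertain) first
    )
    return ranked[:budget]


# Hypothetical production log: image id -> [P(good), P(defect)]
production_log = {
    "part_001": [0.98, 0.02],
    "part_002": [0.55, 0.45],
    "part_003": [0.10, 0.90],
    "part_004": [0.50, 0.50],
}

to_label = select_for_annotation(production_log, budget=2)
print(to_label)
```

With these sample values, the two images whose probabilities are closest to 50/50 are selected, so annotation effort goes to the cases the model finds hardest.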

MYTH #2: It’s difficult to define and measure time, costs and benefits of an AI project

Those involved in evaluating the feasibility of artificial intelligence projects must first recognize their multidisciplinary nature: they cut across the IT, production and organizational domains. Involving the right people in defining requirements and evaluation KPIs is the key starting point.

You need to be ready to close a project when no clear benefit emerges in its early stages and, equally, to have the vision to move to production as soon as possible so that the solution faces the variability of the field.

In this specific business case, the project with Gnutti Carlo Spa took 6 months of work, and goals and KPIs were set and shared with the customer from the start.

First of all, we decided to develop a solution that would reuse the vision system previously installed, so as to make the most of the investment already made, as well as the dataset of defect images already collected.

Secondly, we decided to develop an algorithm that would not only discriminate between bad and good parts, but also classify defects by type (image classification) and pinpoint anomalies so that the operator can view them (image segmentation), understand their causes and implement corrective actions across the supply chain.
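To make the relationship between these three outputs concrete, here is a minimal sketch of how a part-level verdict and a list of defect types could be derived from a per-pixel segmentation mask. It is only an illustration under stated assumptions: the label ids, the defect names `scratch` and `porosity`, and the function `summarize_mask` are hypothetical, since the real defect categories are not listed here.

```python
from collections import Counter

# Hypothetical label ids; the real defect categories are not given in the text.
BACKGROUND = 0
DEFECT_NAMES = {1: "scratch", 2: "porosity"}


def summarize_mask(mask):
    """Derive a part-level verdict from a per-pixel segmentation mask.

    `mask` is a 2D list of label ids, one per pixel.
    Returns ("good" | "bad", {defect name: number of pixels}).
    """
    pixel_counts = Counter(
        label for row in mask for label in row if label != BACKGROUND
    )
    defects = {DEFECT_NAMES[label]: n for label, n in pixel_counts.items()}
    verdict = "bad" if defects else "good"
    return verdict, defects


mask = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 2, 0],
]
verdict, defects = summarize_mask(mask)
print(verdict, defects)
```

The mask itself is what the operator would view to locate each anomaly, while the summary gives the good/bad decision and the defect types found.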

The following are the reference KPIs set to evaluate the quality of the solution:

- the ROI, i.e. the return on investment, which was measured as follows: during the validation phase, the algorithm was tested on a dataset of 10,000 parts that operators had judged to be “waste”. The AI re-classified 80% of them as “good” (these are cases in which operators give precautionary judgments), and these parts were put back on the market, which made it possible to recover the investment in the AI solution almost completely.

- the detectability level: the algorithm is highly capable of detecting defects and of distinguishing real defects from halos or dirt. During testing, the AI system and the operator disagreed on 250 pieces; only 5 of these were judged “false good”, and such cases are extremely uncertain even for experienced operators. The system missed no major defects.
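The figures quoted for these two KPIs reduce to simple arithmetic, sketched below. The function names are hypothetical, and the false-good rate is computed only over the 250 pieces with conflicting opinions, because the total number of pieces in that test is not stated.

```python
def recovered_parts(parts_judged_waste, share_reclassified_good):
    """Number of parts the AI moved from "waste" back to "good"."""
    return round(parts_judged_waste * share_reclassified_good)


def false_good_rate(false_good, disputed):
    """Share of disputed pieces that the AI wrongly accepted."""
    return false_good / disputed


recovered = recovered_parts(10_000, 0.80)  # figures from the ROI KPI
rate = false_good_rate(5, 250)             # figures from the detectability KPI

print(f"recovered parts: {recovered}")
print(f"false good among disputed pieces: {rate:.1%}")
```

In other words, 8,000 of the 10,000 parts were recovered, and 2% of the disputed pieces were false goods.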

So, how can the definition of time, costs and benefits be made more effective?

Here's our method:

1. starting with a defined business need strongly perceived by the company;

2. bringing into the project team the people involved in the process under consideration, so that all departments are represented, from IT to production to strategy;

3. moving to production as soon as possible in order to immediately face the variability of the field;

4. providing companies with tools to interact with the algorithms, from the training phase to inference in production;

5. providing companies with tools for versioning data and models, and supporting them in the definition of appropriate validation procedures;

6. providing companies with tools for real-time monitoring of performance (visit the invariant.ai section);

7. providing companies with automatic active learning tools to increase the performance of models over time;

8. accompanying our client end-to-end throughout the entire AI lifecycle, from problem set-up to deployment and monitoring in production.
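As one possible illustration of point 5, the sketch below shows a simple way to tie a trained model to the exact version of the data it was trained on, by fingerprinting the annotations. It is an assumption for illustration only, not the tooling used in the project: `dataset_fingerprint`, the file names, the labels and the `model_card` structure are all hypothetical.

```python
import hashlib
import json


def dataset_fingerprint(annotations):
    """Return a short, deterministic id for a set of annotations.

    `annotations` maps an image file name to its label. Sorting the keys
    makes the fingerprint independent of insertion order.
    """
    canonical = json.dumps(annotations, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


# Hypothetical annotations after the first and second labelling iterations
v1 = {"img_0001.png": "good", "img_0002.png": "defect"}
v2 = {**v1, "img_0003.png": "defect"}

# Record which data version a model was trained on
model_card = {"model": "defect-detector", "trained_on": dataset_fingerprint(v2)}
print(model_card)
```

Any change to the annotations produces a different fingerprint, so a validation result can always be traced back to a specific pairing of data and model.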

Want to learn more about our case histories?

Visit our case histories section.