Our first time at We Make Future

THE Ò-BLOG

“Who’s heard of PyTorch?”

9 June 2025

Reading time: 3 minutes

“Who’s heard of PyTorch?” Two hands went up. 😅

That’s how our CTO, Luca Antiga,, opened his talk at WMF – We Make Future. A start that quickly reminded us we weren’t on familiar ground: not in front of a crowd of developers or technical insiders, but surrounded by startups, creatives, digital activists, and innovators from all kinds of fields.

An unusual event for us – and a breath of fresh air.

We listened, met new people, exchanged ideas. And we challenged ourselves by sharing our approach to building AI for industry.

Inside our approach to building AI

We’d rather build AI than talk about it.

And we love building it alongside people who want to change things and create real value.

But telling the story right is part of the job, in fact, it’s the first step to making it real.

Being able to communicate the potential of AI, even beyond purely technical circles, helps break down the cultural barriers that still slow its adoption in industrial settings.

This is especially true in factories and plants, where every second counts, mistakes have real costs, and every innovation needs to prove its worth fast.

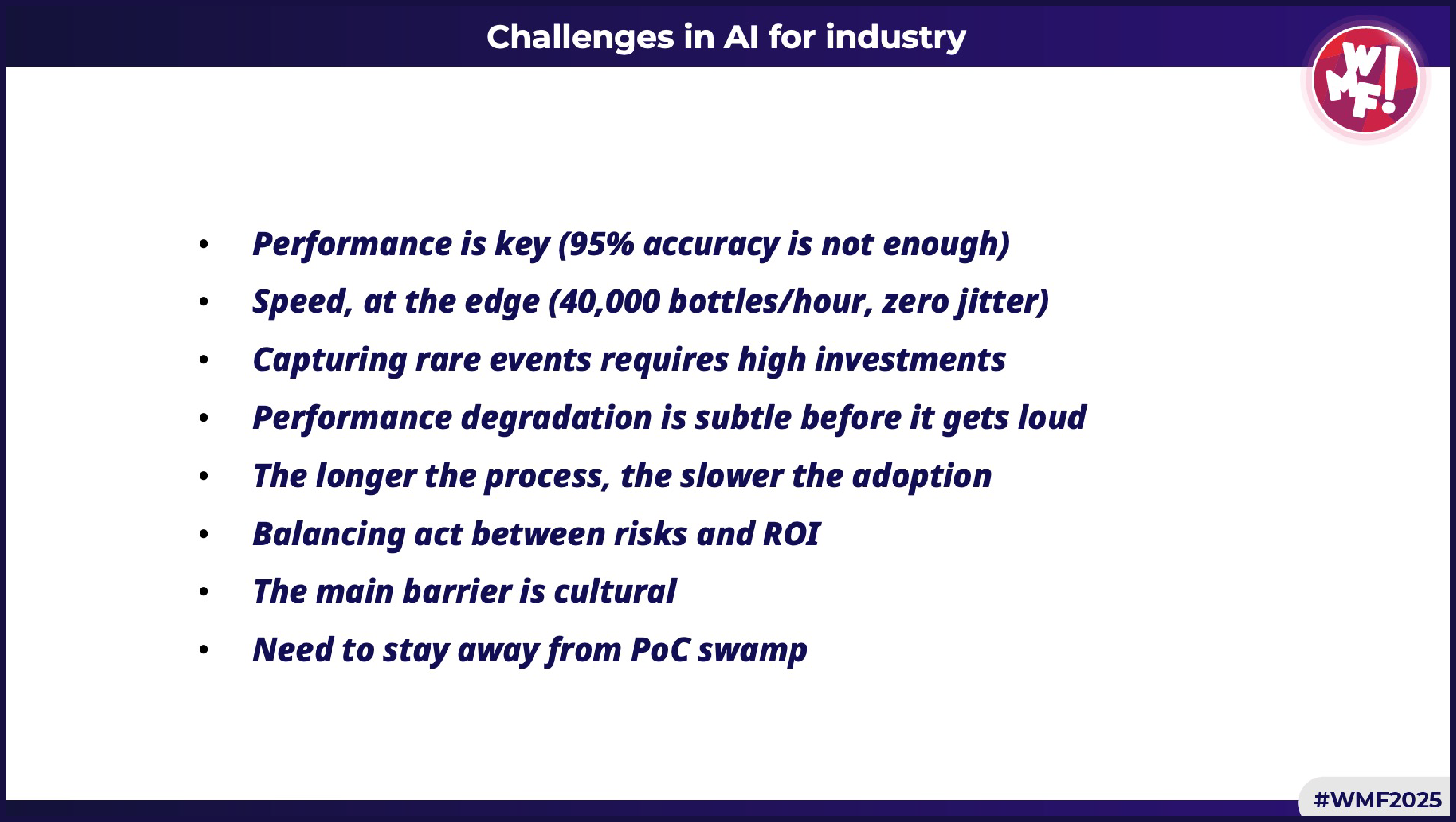

8 real challenges of Industrial AI

That’s where Luca started: the tough challenges we face every day building AI systems for real industrial environments.

-

- Performance is the key – 95% accuracy isn’t enough in an industrial setting: we need robustness, explainability, and long-term stability.

- Speed – AI has to keep up with production: think 40,000 bottles/hour, zero jitter.

- Rare events – They’re hard to detect and require huge effort in data and annotations, but that’s exactly what the model must learn to recognize.

- Performance degradation – Models first drift silently, then crash suddenly: you need monitoring to catch the early signs.

- Long processes = slow adoption – The more complex the process, the more patience (and trust) it takes to make AI deliver business value.

- Balancing risk and ROI – Every project is a trade-off between investment, expected impact, and perceived risk.

- Cultural barriers – Tech alone isn’t enough if people and processes aren’t ready.

- The PoC trap – Beware of proof-of-concepts that never make it to production!

3 paradigm shifts in 5 years.

For those who’ve been working in AI for years, it’s clear we’re no longer in the same phase we were five years ago. Luca walked through the key stages of this evolution, the same journey we’ve experienced at Orobix.

- Phase 1 – 2018: Train from scratch

Deep learning begins entering industrial workflows. Back then, we had already spent years deploying vision systems in production. It meant tons of annotated data, curated by experts. Small models, trained from scratch for every use case. Solid results, but at a very high cost.

- Phase 2 – 2022: Pretrained + fine-tuning

With the rise of Vision Transformers, pretrained models changed the game: fine-tuning with 20–30 images (okay, not always that simple, but the leap was real). Less human supervision, faster setup, more computational power. That’s the foundation of AI-go, our platform for training, testing, and deploying AI for visual inspection in manufacturing. Industrial AI, made real – as we like to say!

- Phase 3 – 2025 (already here): Promptable Multimodal Autonomous Agents

The arrival of GPT marked the beginning of a new phase: multimodal agents. Models that understand both images and text – and can answer questions even without being trained on rare events. You can show the model a good part, describe some common defects, and ask it to find them. No more long data collection campaigns. Delivery time drops from months to days. A complete shift in how we work.

But how does multimodal AI really work?

Multimodal AI combines text and images in a single model that can understand both. It works by splitting images into patches and converting them into image tokens, similar to words, using models like Vision Transformers. These tokens are then processed together with text by a model that learns to relate what it “sees” with what it “reads.” So, for example, you can show the system a reference part, give it a new one to inspect, and ask: “Find any anomalies such as cracks, surface defects, deformations, stains… and if you detect something, return an image with a bounding box on it.”

No need to train a new model each time: with the right prompt engineering, you can guide the system. It replies by combining visual and textual understanding, returning text, images, or both.

This is the principle behind Vision-Language Models (VLMs) like Qwen 2.5 VL, LLaMA 4, Gemma 3, Gemini 2.5, GPT-4o, Claude 4, and more. The result? Faster development, less data required, and more flexible applications – even in industry (with some care, of course… we’re not giving away all our secrets!).

So, what did we take away from WMF?

At WMF, we stepped outside our comfort zone. We brought industrial AI to a broader audience. We got questions, feedback, new perspectives. And most importantly: we confirmed something we’ve long believed. AI needs to be explained better – if we want people to truly understand the value it can deliver.

In the meantime, we’ll keep building it. Want to learn more? Drop us a line: 📩 info@orobix.com